MULTI-MODAL SYSTEM DESIGN

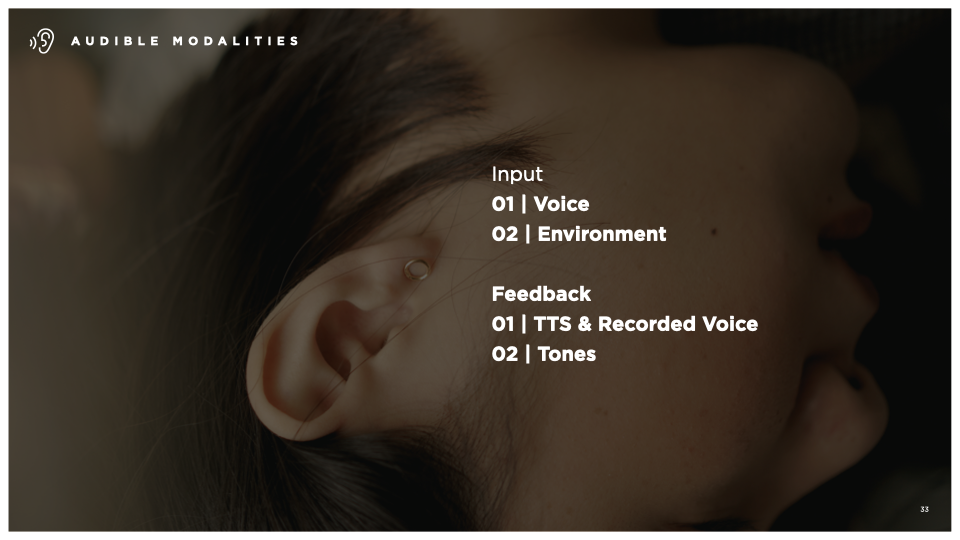

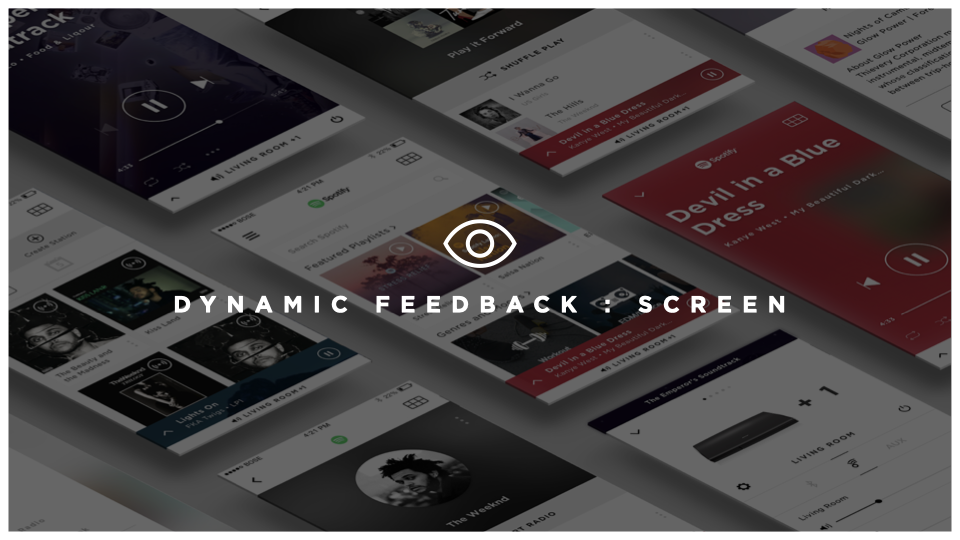

As interfaces evolve from computer-readable to human-readable, the rules of interaction design have to evolve with them. This project tackled the challenge of designing a cohesive multi-modal system for the Bose ecosystem — one that would ensure every product, regardless of its input method, feels unmistakably and consistently Bose.

The central insight driving the work: when a user buys a Bose product, it should act and react like one. That meant establishing a shared interaction language across touch, gesture, voice, and beyond — so that knowledge gained on one product transfers naturally to the next, building user confidence across the entire ecosystem.

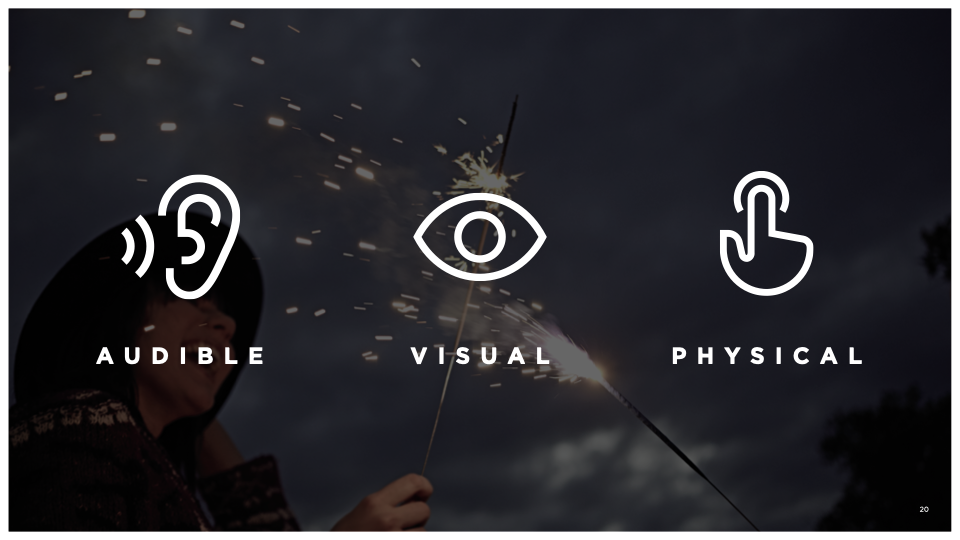

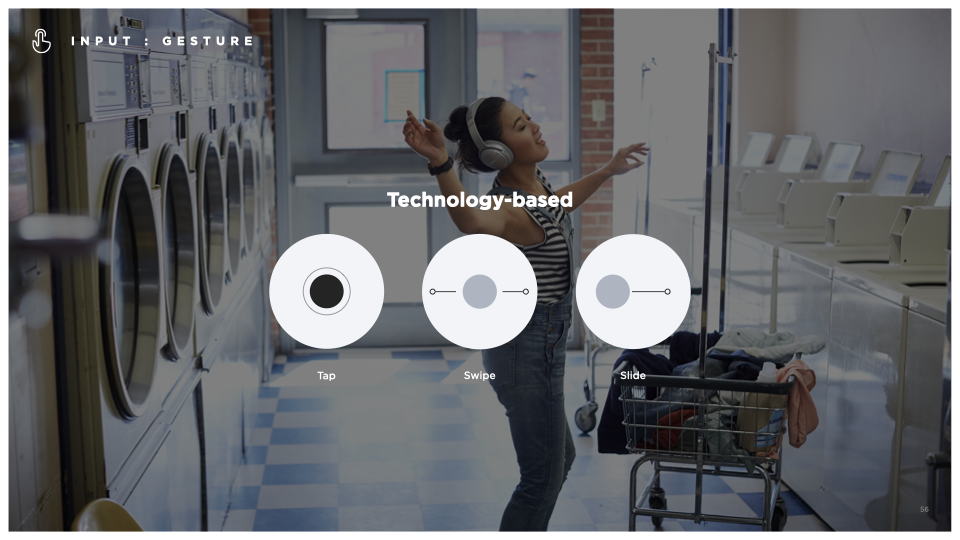

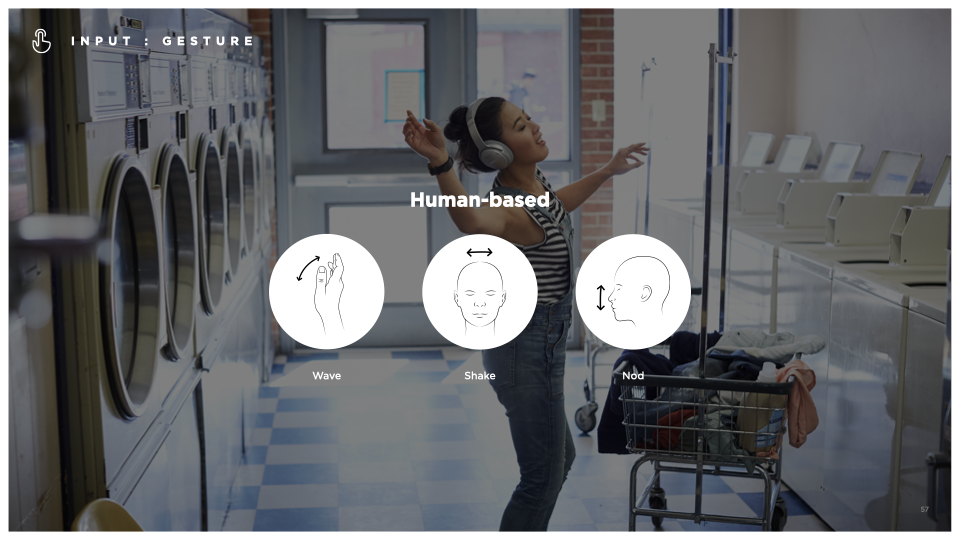

A core area of focus was gesture design. The work defined principles for making gestures intuitive and low-effort: favoring single-vector, short-duration interactions over complex multi-step ones, and grounding new gestures in familiar metaphors, like a swipe to dismiss or a sliding scale for volume. Skeuomorphic references to previously learned behaviors were embraced deliberately, helping users make sense of new interaction methods without starting from scratch.

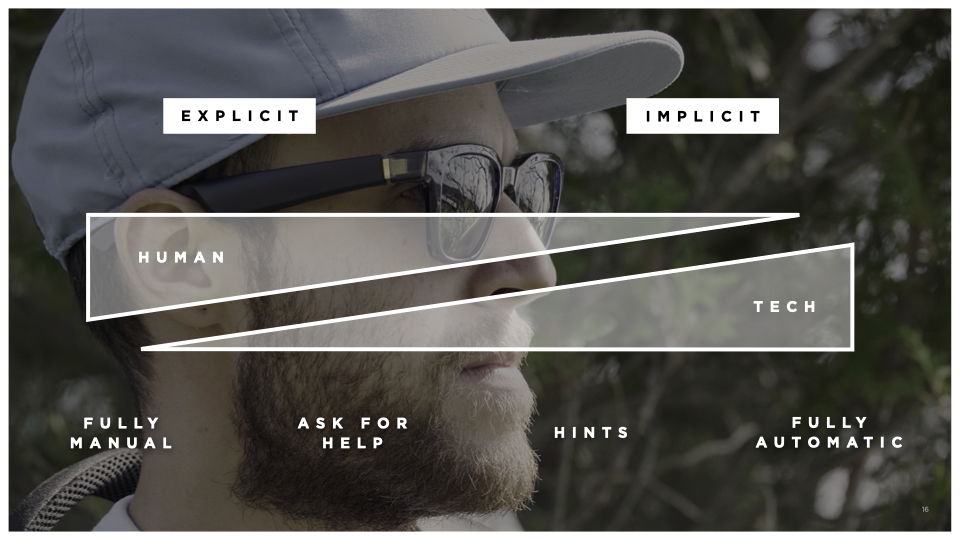

The system also accounted for human-based inputs — head nods, gaze detection, and conversational cues — pointing toward a future where interaction feels less like operating a device and more like a natural exchange. Throughout, the guiding principle was restraint: fewer gestures per surface, clearer hierarchy, and a consistent ecosystem-wide vocabulary that scales gracefully to new products over time.

Over time, our user interfaces have evolved from computer-readable to human-readable, making interactions more natural and implicit.